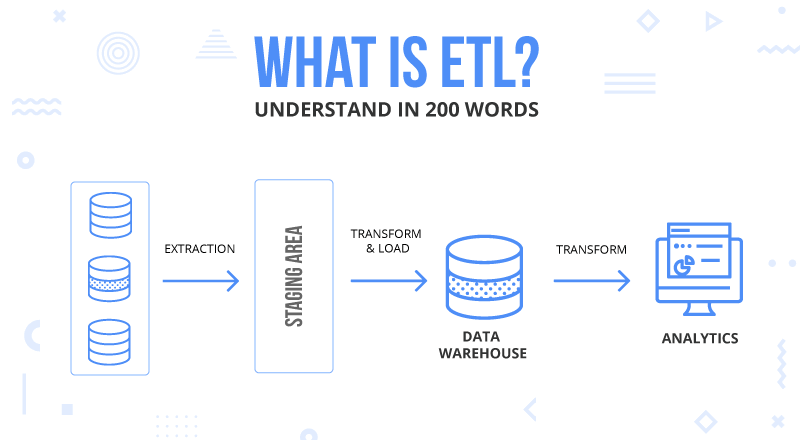

An ETL pipeline is a structured data processing framework central to data integration and analytics. Understanding the ETL pipeline means grasping the process of systematically extracting, transforming, and loading data to facilitate meaningful analysis and decision-making.

The Significance of ETL Pipelines

ETL pipelines unify diverse data sources, enabling seamless analysis of structured and unstructured data types to provide a holistic view of a team’s data environment. They streamline data preparation, automating tasks to ensure clean, structured data for versatile analytical tools and ETL techniques. At the same time, their scheduled operation keeps data up to date for real-time or periodic analysis, ultimately empowering analysts and data scientists to extract valuable insights.

ETL pipelines integrate data from various sources into a unified format, allowing for comprehensive analysis and a holistic view of the data landscape.

Cloud ETL offers scalability, cost flexibility, integration with cloud services, and reduced maintenance burden compared to traditional ETL.

| Aspect | Traditional ETL | Cloud ETL |
| --- | --- | --- |
| Infrastructure | | Cloud-based ETL infrastructure provided by vendors (e.g., AWS, Azure, GCP) |
| Storage | | Cloud-based storage solutions (e.g., Amazon S3, Azure Data Lake Storage) |
| Cost | Fixed costs (capital expenditure for hardware) | |
| Scalability | Manual scaling requires hardware procurement | Auto-scaling; resources can be easily adjusted as needed |
| Integration | Limited and may require additional middleware | Seamless integration with a wide range of cloud services |
| Maintenance | Teams are responsible for software updates and maintenance | Cloud providers manage and update the ETL service |
| Deployment | Deployment may take time due to hardware setup | Quick setup and deployment in the cloud environment |
| Accessibility | Limited remote access may hinder collaboration | Accessible from anywhere with an internet connection, promoting collaboration |

Let's break down the critical components of an ETL pipeline:
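As a rough illustration of the extract, transform, and load stages described here, the following is a minimal sketch using only the Python standard library. The sample data, the `sales` table, and the function names are hypothetical stand-ins chosen for this example; a real pipeline would read from actual source systems and write to a proper warehouse.

```python
import csv
import io
import json
import sqlite3

# Hypothetical sample inputs standing in for two different source systems.
CSV_SOURCE = "id,name,amount\n1, Alice ,10.50\n2,Bob,\n"
JSON_SOURCE = '[{"id": 3, "name": "carol", "amount": "7.25"}]'


def extract():
    """Extract: read raw records from each source as plain dicts."""
    rows = list(csv.DictReader(io.StringIO(CSV_SOURCE)))
    rows.extend(json.loads(JSON_SOURCE))
    return rows


def transform(rows):
    """Transform: coerce types, tidy names, and drop rows missing an amount."""
    cleaned = []
    for row in rows:
        amount = str(row.get("amount") or "").strip()
        if not amount:
            continue  # skip incomplete records
        name = str(row["name"]).strip().title()
        cleaned.append((int(row["id"]), name, float(amount)))
    return cleaned


def load(records, conn):
    """Load: write the unified records into a single target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS sales "
        "(id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    # INSERT OR REPLACE keeps the load idempotent across repeated runs.
    conn.executemany("INSERT OR REPLACE INTO sales VALUES (?, ?, ?)", records)
    conn.commit()


def run_pipeline(conn):
    load(transform(extract()), conn)


conn = sqlite3.connect(":memory:")  # in-memory database used as the target
run_pipeline(conn)
result = conn.execute("SELECT id, name, amount FROM sales ORDER BY id").fetchall()
print(result)
```

Because the load step replaces rows by primary key, running `run_pipeline` again on a schedule would refresh the table rather than duplicate records, which is one simple way to keep the target current for periodic analysis.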
